Operational reporting focuses on real-time data to answer the question: "What’s happening right now?" Unlike analytical reporting, which analyzes historical trends, operational reporting delivers up-to-the-minute insights to support immediate decisions. Modern Operational Data Warehouses (ODWs) enable this by streaming and processing data as events occur, making them essential for scenarios like fraud detection or inventory management.

Key highlights from the article:

- Purpose: Tracks real-time data for immediate actions (e.g., rerouting shipments, reallocating staff).
- Types of Reports:
  - Real-time monitoring (e.g., equipment or labor metrics).
  - Resource planning (e.g., staffing or delivery schedules).
  - Forecasting future needs.
  - Trend analysis for predicting failures.
- Operational Data Store (ODS): Acts as a dynamic, real-time snapshot of data for tactical decisions.
- ODW Features:
  - Continuous data updates for real-time queries.
  - Integration with systems using tools like Change Data Capture (CDC).
  - ETL vs ELT processes for faster data preparation.

These systems are transforming how businesses handle real-time reporting, enabling faster decisions and improving efficiency across industries.

Types of Operational Reports in Data Warehouses

Data warehouses provide specialized operational reports that deliver detailed, real-time data to support immediate decision-making. Unlike long-term strategic reports, these focus on short-term timeframes - from real-time updates to daily or weekly summaries. Their goal is to drive specific actions, whether it’s reallocating staff, managing inventory, or rerouting shipments. These reports are often customized for various departments - logistics might track delivery times, manufacturing could monitor machinery performance, and HR might oversee absenteeism. Here’s a closer look at some of the key operational report types that enable swift, informed decisions.

Real-Time Equipment and Labor Monitoring

Real-time monitoring reports are a prime example of the immediacy needed for operational decisions. These reports provide continuous updates on workforce and equipment metrics. For instance, they can track technician locations, ticket resolution times, and staffing levels, helping managers allocate resources to meet demand. On the equipment side, telemetry data - like GPS tracking for delivery trucks or warehouse inventory sensors - offers instant insights into asset performance and location.

A great example comes from Philz Coffee, which, in January 2025, combined its in-store and mobile systems into a single dashboard using Domo. This allowed store managers to monitor hourly sales, mobile orders, and shift performance in real time. The result? Over 16 hours of manual reporting eliminated each month and a more consistent customer experience. As their Operations Director explained:

"With Domo, our team isn't just reacting - we're planning and adjusting in the moment."

Advanced systems now go a step further, triggering semi-automated responses based on real-time data. These systems can call external APIs to dispatch technicians or alert users to exceptions as they happen, reducing the need for manual intervention. Real-time ingestion engines, capable of processing millions of events per hour, make this level of responsiveness achievable. This immediacy is crucial for keeping operations smooth and responsive.

Resource and Schedule Planning

Resource and schedule planning reports are designed to optimize staffing and equipment usage. They analyze current data to answer practical questions like: Do we have enough staff for tomorrow’s expected workload? Which delivery trucks can handle the afternoon route?

DHL offers a compelling example. By implementing real-time dashboards, they eliminated a seven-day delay in processing ambient temperature data, which helped protect sensitive shipments and increased supply chain transparency. Their Global Analytics Lead summed it up:

"Domo gave us eyes on our supply chain like never before."

Retailers also benefit from such systems. For example, 7-Eleven Vietnam used an integrated platform to connect data from inventory, point-of-sale, and supply systems. This enabled real-time tracking of product-level sales and supplier delivery schedules, which reduced stockouts, improved availability, and helped scale store performance management.

Resource Availability Forecasting

Forecasting reports combine operational and historical data to predict future resource needs. These reports can trigger alerts when thresholds are reached, allowing teams to make proactive adjustments.
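The threshold-alert idea can be sketched in a few lines. This is a minimal illustration, assuming a naive moving-average forecast; the function names (`forecast_next`, `needs_alert`), the 90% threshold, and the ticket counts are all hypothetical and not taken from any specific product.

```python
from statistics import mean

def forecast_next(history, window=3):
    """Naive forecast: average of the most recent `window` observations."""
    if not history:
        raise ValueError("history must not be empty")
    return mean(history[-window:])

def needs_alert(forecast, capacity, threshold=0.9):
    """Flag when forecast demand reaches `threshold` of available capacity."""
    return forecast >= threshold * capacity

# Illustrative data: hourly ticket counts and a team capacity of 60 (made up).
tickets_per_hour = [40, 44, 52, 58, 63]
predicted = forecast_next(tickets_per_hour)  # average of 52, 58, 63
alert = needs_alert(predicted, capacity=60)
```

A production forecast would likely account for seasonality and use far more history; the point here is only that the alert is evaluated against the predicted value rather than the current one.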

Historical Trend Analysis for Reliability

Historical trend analysis digs into long-term data to uncover patterns and predict potential failures. This type of reporting, sometimes called "predictive operational intelligence", uses historical sensor data to forecast issues like equipment breakdowns. For example, a manufacturing plant might analyze vibration data from motors to predict when a bearing is likely to fail, allowing maintenance to be scheduled during planned downtime.
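As a rough sketch of the bearing example, one simple approach is to fit a straight line to past vibration readings and estimate when it crosses a limit. The linear model, the function names, and the readings below are assumptions made for illustration; real predictive maintenance models are usually more sophisticated.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def days_until_limit(days, readings, limit):
    """Estimate days remaining before the fitted trend reaches `limit`."""
    slope, intercept = fit_line(days, readings)
    if slope <= 0:
        return None  # no upward trend, so no crossing is predicted
    crossing_day = (limit - intercept) / slope
    return max(0.0, crossing_day - days[-1])

# Illustrative vibration readings (mm/s) over five days; values are made up.
days = [0, 1, 2, 3, 4]
vibration = [2.0, 2.5, 3.0, 3.5, 4.0]
remaining = days_until_limit(days, vibration, limit=7.0)
```

The estimate gives maintenance planners a window in which to schedule the repair during planned downtime.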

Data warehouses play a key role here by preserving historical context through Type 2 Slowly Changing Dimensions (SCDs), which maintain a complete history of changes in dimension attributes for accurate long-term reporting.
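A Type 2 SCD keeps history by closing the current row and appending a new one whenever an attribute changes. The sketch below shows that mechanic in plain Python; the `DimRow` fields and helper names are hypothetical, and a real warehouse would typically do this with SQL merge logic.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class DimRow:
    machine_id: str
    location: str
    valid_from: date
    valid_to: Optional[date] = None  # None marks the current version

def apply_scd2(rows, machine_id, new_location, change_date):
    """Close the current version and append a new one if the attribute changed."""
    current = next(
        (r for r in rows if r.machine_id == machine_id and r.valid_to is None),
        None,
    )
    if current is not None:
        if current.location == new_location:
            return rows  # nothing changed; history stays as-is
        current.valid_to = change_date
    rows.append(DimRow(machine_id, new_location, change_date))
    return rows

def location_as_of(rows, machine_id, when):
    """Look up the attribute value that was valid on a given date."""
    for r in rows:
        if (r.machine_id == machine_id and r.valid_from <= when
                and (r.valid_to is None or when < r.valid_to)):
            return r.location
    return None

history = [DimRow("M-1", "Plant A", date(2023, 1, 1))]
apply_scd2(history, "M-1", "Plant B", date(2024, 6, 1))
```

Because old versions are never overwritten, `location_as_of` can answer questions about any past date, which is what long-term reliability reporting depends on.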

The demand for predictive capabilities is fueling growth in the global data warehousing market, which is projected to reach $51.18 billion by 2028. Modern warehouses are increasingly incorporating machine learning models to automate anomaly detection and pattern recognition, helping organizations shift from reactive to proactive management.

Operational Data Store (ODS) in Real-Time Reporting

Data warehouses are fantastic for long-term analysis, but they often fall short when it comes to making immediate, tactical decisions. That’s where an Operational Data Store (ODS) steps in. An ODS serves as a central database, offering a snapshot of the most up-to-date data from multiple transactional systems. It combines this information in its original format, making it perfect for real-time business reporting. Essentially, it acts as a bridge between live operational systems and the data warehouse, enabling real-time or near real-time decision-making. Unlike data warehouses, which focus on storing historical, summarized data, an ODS is highly dynamic - it constantly overwrites data to reflect the latest transactions and metrics as they happen. This makes it a go-to solution for questions like, “What’s the current status of this customer’s order?” or “Which products sold in the last hour?”

To make this happen, data is integrated from various sources using tools like data virtualization, data federation, or ETL processes. As part of this integration, the data is cleansed, deduplicated, and validated against business rules to ensure its accuracy and reliability.
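The overwrite behavior that distinguishes an ODS can be illustrated with an in-memory SQLite table in which each new event replaces the previous row for the same key. The table and column names are hypothetical, and SQLite stands in here for whatever database actually hosts the ODS.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE order_status ("
    "order_id TEXT PRIMARY KEY, status TEXT, updated_at TEXT)"
)

def record_event(order_id, status, updated_at):
    """Overwrite the row for this order so the table always holds the latest state."""
    conn.execute(
        "INSERT OR REPLACE INTO order_status (order_id, status, updated_at) "
        "VALUES (?, ?, ?)",
        (order_id, status, updated_at),
    )

record_event("A-100", "placed", "2025-01-05T09:00:00")
record_event("A-100", "shipped", "2025-01-05T13:30:00")

# "What's the current status of this customer's order?"
current = conn.execute(
    "SELECT status FROM order_status WHERE order_id = ?", ("A-100",)
).fetchone()[0]
row_count = conn.execute("SELECT COUNT(*) FROM order_status").fetchone()[0]
```

Only one row per order survives, which keeps lookups fast but means the earlier "placed" state is gone; preserving that history is the data warehouse's job.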

The growing demand for real-time reporting highlights the importance of ODS systems. The real-time data warehousing market, for example, is expected to grow significantly - from $11.7 billion in 2024 to $23.6 billion by 2033, with an annual growth rate of 7.3%. Businesses are increasingly turning to low-code integration platforms that synchronize data from over 100 sources in real time. Technologies like Apache Kafka and Apache Flink are also gaining traction, as they support continuous data ingestion instead of relying on traditional batch processing.

A great example of the impact of modern data architecture is AMN Healthcare, which achieved a 93% reduction in data lake costs by using platforms like Snowflake. For organizations looking to implement an ODS, it’s wise to focus on high-value data assets like customer or product information. An ODS can also act as a staging area for raw data before transformation, simplifying debugging and making process restarts easier. Adding automated data quality checks to the ingestion pipeline ensures that flawed data never makes it into the system.
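An automated quality check at ingestion might look something like the sketch below, which normalizes a key, rejects rows that fail a business rule, and drops duplicates. The field names and the "quantity must be a non-negative integer" rule are assumptions chosen for illustration.

```python
def clean_records(records):
    """Normalize keys, reject rows failing validation, and drop duplicate keys."""
    seen, accepted, rejected = set(), [], []
    for rec in records:
        row = {"sku": rec["sku"].strip().upper(), "qty": rec["qty"]}
        # Business rule (illustrative): quantity must be a non-negative integer.
        if not isinstance(row["qty"], int) or row["qty"] < 0:
            rejected.append(row)
            continue
        if row["sku"] in seen:
            continue  # duplicate of a row already accepted
        seen.add(row["sku"])
        accepted.append(row)
    return accepted, rejected

raw = [
    {"sku": " ab-1 ", "qty": 5},
    {"sku": "AB-1", "qty": 5},   # same item after normalization
    {"sku": "cd-2", "qty": -3},  # fails the business rule
]
accepted, rejected = clean_records(raw)
```

Keeping the rejected rows, rather than silently discarding them, makes it easier to trace a data quality issue back to its source system.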

Operational Data Warehouse (ODW) Features and Processes

An Operational Data Warehouse (ODW) stands out by continuously processing data, acting as a high-powered system for real-time operations. Unlike traditional analytical warehouses that rely on batch processing at set intervals - like hourly or daily - an ODW streams and ingests data as events occur across your organization. This real-time capability makes ODWs ideal for immediate decision-making, setting them apart from Operational Data Stores (ODS), which primarily focus on capturing current snapshots.

Real-Time Processing and Query Optimization

ODWs take the functionality of an ODS to the next level by optimizing data for real-time insights. They automatically update materialized views and indexes as data changes, ensuring that dashboards and reports always display the most up-to-date information without requiring manual updates. As Andrew Yeung, Sr. Director of Product Marketing at Materialize, puts it:

"An operational data warehouse receives data as events happen, and can transform, normalize, and enrich the data as it lands".

This system simplifies the complexities of streaming data, allowing analysts to use familiar SQL for business logic. This accessibility eliminates the need for specialized streaming expertise, making it easier for teams to leverage the ODW effectively.
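To show what "updating a view as data changes" means, the sketch below maintains per-store sales totals incrementally instead of recomputing them from scratch on every query. It is a conceptual illustration in plain Python with hypothetical event fields; in an actual ODW the equivalent logic would be expressed as a SQL materialized view and maintained by the engine.

```python
from collections import defaultdict

class RunningSalesView:
    """Keeps per-store totals current as each event arrives (no full recompute)."""

    def __init__(self):
        self.totals = defaultdict(float)

    def apply(self, event):
        # A sale adds to the store's total; a refund subtracts from it.
        sign = -1 if event["type"] == "refund" else 1
        self.totals[event["store"]] += sign * event["amount"]

view = RunningSalesView()
events = [
    {"store": "S1", "type": "sale", "amount": 20.0},
    {"store": "S2", "type": "sale", "amount": 5.0},
    {"store": "S1", "type": "refund", "amount": 8.0},
]
for ev in events:
    view.apply(ev)
```

Each event costs a constant amount of work, so a dashboard reading `view.totals` sees fresh numbers without rescanning the full event history.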

Integration with Other Systems

ODWs serve as a critical bridge between source systems and enterprise data warehouses, processing fresh data before it's moved into long-term storage. They handle "hybrid data", which includes a mix of formats such as IoT data, geocoding, events, images, audio, and even machine learning training sets. Integration is achieved through high-speed methods like Change Data Capture (CDC) via transaction logs, materialized views, and file system mirroring.
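Log-based CDC boils down to replaying an ordered stream of insert, update, and delete events against a target. The sketch below assumes a simplified, hypothetical event shape (`lsn`, `op`, `key`, `row`); real CDC tools each define their own formats.

```python
def apply_change(replica, change):
    """Apply a single change event to the replica."""
    op, key = change["op"], change["key"]
    if op in ("insert", "update"):
        replica[key] = change["row"]
    elif op == "delete":
        replica.pop(key, None)
    else:
        raise ValueError(f"unknown op: {op}")

def replay(replica, log, last_lsn=0):
    """Apply changes in log order, skipping any that were already applied."""
    for change in sorted(log, key=lambda c: c["lsn"]):
        if change["lsn"] <= last_lsn:
            continue
        apply_change(replica, change)
        last_lsn = change["lsn"]
    return last_lsn

change_log = [
    {"lsn": 1, "op": "insert", "key": 1, "row": {"qty": 10}},
    {"lsn": 2, "op": "update", "key": 1, "row": {"qty": 7}},
    {"lsn": 3, "op": "insert", "key": 2, "row": {"qty": 4}},
    {"lsn": 4, "op": "delete", "key": 2},
]
replica = {}
position = replay(replica, change_log)
```

Tracking the last applied log position is what allows a consumer to restart after a failure without applying the same change twice.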

Modern ODWs are versatile, operating across on-premises and cloud environments - whether public, private, or multicloud. They also support a wide range of access methods, including SQL, R, Scala, and graphical interfaces, catering to users with varying skill levels. As Philip Russom, Author/Analyst at TDWI, explains:

"The modern ODW delivers insights from a hybrid data architecture quickly enough to impact operational business decisions".

ELT Processes for Data Preparation

ODWs have revolutionized data preparation by shifting from traditional ETL (Extract, Transform, Load) processes to ELT (Extract, Load, Transform). With ELT, raw data is loaded into the system first and then transformed in place. This approach supports high-volume, real-time analysis by cleaning, scrubbing, and validating data as it arrives. The result is a dependable "single source of truth" that’s ready for operational decisions, such as fraud detection or dynamic pricing.
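The load-then-transform order can be seen in a small example that lands raw values untouched and cleans them afterwards inside the database. SQLite is used purely for illustration, and the table names, columns, and the simple "amount starts with a digit" rule are assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Load step: raw values land untouched, as text.
conn.execute("CREATE TABLE raw_payments (payment_id TEXT, amount TEXT, currency TEXT)")
conn.executemany(
    "INSERT INTO raw_payments VALUES (?, ?, ?)",
    [("p1", " 19.99", "usd"), ("p2", "5.00 ", "USD"), ("p3", "n/a", "usd")],
)

# Transform step: runs inside the database, after loading.
conn.execute(
    """
    CREATE TABLE payments AS
    SELECT payment_id,
           CAST(TRIM(amount) AS REAL) AS amount,
           UPPER(currency) AS currency
    FROM raw_payments
    WHERE TRIM(amount) GLOB '[0-9]*'
    """
)

clean = conn.execute(
    "SELECT payment_id, amount, currency FROM payments ORDER BY payment_id"
).fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM raw_payments").fetchone()[0]
```

Because the raw table is preserved, the transformation can be corrected and re-run later without going back to the source system.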

ODWs also resolve redundancies and enrich data continuously, ensuring it's always ready for immediate use. For organizations frequently re-running queries to access the latest state of rapidly changing data, moving those workloads to an ODW can be a game-changer. For example, AMN Healthcare reported a 93% reduction in data lake costs after incorporating Snowflake's modern data platform into their operational data strategy.

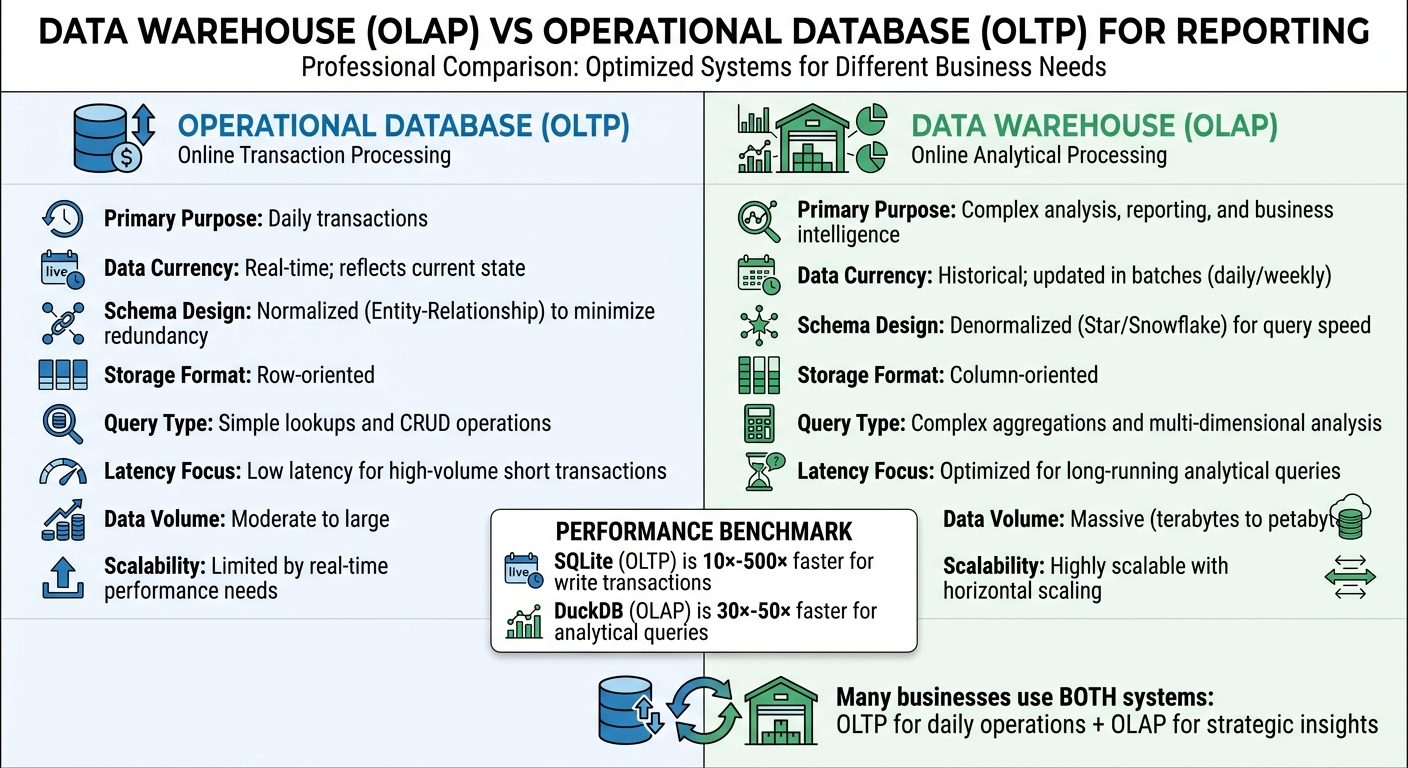

Data Warehouse vs. Operational Database for Reporting

When it comes to reporting, data warehouses and operational databases serve very different purposes. Operational databases, often referred to as OLTP systems, are designed to manage day-to-day business transactions. Think of tasks like processing orders, updating inventory, or managing customer accounts. These systems focus on real-time accuracy and fast processing speeds. On the other hand, data warehouses, known as OLAP systems, are built for analytical reporting. They store cleaned and consolidated historical data, making them ideal for complex analyses.

Performance benchmarks highlight the distinct strengths of these systems. For example, tests comparing SQLite (optimized for OLTP) with DuckDB (optimized for OLAP) found that SQLite was 10× to 500× faster than DuckDB for write transactions, while DuckDB outperformed SQLite by 30× to 50× on analytical queries from the Star Schema Benchmark. This performance difference stems from their storage designs: operational databases use row-oriented storage to quickly access all fields of a single record, while data warehouses rely on column-oriented storage, which is highly efficient for aggregating data across millions of records.
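The difference between the two layouts can be pictured with the same three orders stored both ways. This is a toy model with made-up data, meant only to show which layout each access pattern favors; it says nothing about how SQLite or DuckDB are implemented internally.

```python
# Row-oriented: all fields of a record are stored together.
rows = [
    {"order_id": 1, "customer": "ann", "total": 30.0},
    {"order_id": 2, "customer": "bob", "total": 12.5},
    {"order_id": 3, "customer": "ann", "total": 7.5},
]

# Column-oriented: all values of a field are stored together.
columns = {
    "order_id": [1, 2, 3],
    "customer": ["ann", "bob", "ann"],
    "total": [30.0, 12.5, 7.5],
}

def fetch_order(order_id):
    """Point lookup: the row layout returns every field of one record at once."""
    return next(r for r in rows if r["order_id"] == order_id)

def total_revenue():
    """Aggregation: the column layout touches only the one field it needs."""
    return sum(columns["total"])

order = fetch_order(2)
revenue = total_revenue()
```

With millions of records and dozens of fields, reading one contiguous column instead of every full row is where the analytical speedup comes from.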

Many businesses use both systems to meet their varied reporting needs. Operational databases provide real-time data access for employees handling daily tasks, while data warehouses enable analysts and executives to explore trends and patterns over time. To bridge the gap, ELT tools are commonly used to sync transactional data into the data warehouse, ensuring that businesses can balance immediate operations with long-term strategic insights.

Comparison Table: Data Warehouse vs. Operational Database

| Feature | Operational Database (OLTP) | Data Warehouse (OLAP) |
|---|---|---|
| Primary Purpose | Daily transactions | Complex analysis, reporting, and business intelligence |
| Data Currency | Real-time; reflects current state | Historical; updated in batches (daily/weekly) |
| Schema Design | Normalized (Entity-Relationship) to minimize redundancy | Denormalized (Star/Snowflake) for query speed |
| Storage Format | Row-oriented | Column-oriented |
| Query Type | Simple lookups and CRUD operations | Complex aggregations and multi-dimensional analysis |
| Latency Focus | Low latency for high-volume short transactions | Optimized for long-running analytical queries |
| Data Volume | Moderate to large | Massive (terabytes to petabytes) |
| Scalability | Limited by real-time performance needs | Highly scalable with horizontal scaling |

Best Practices for Operational Reporting in Data Warehouses

Automated Batch Processes for Efficiency

Automated batch processes are a cornerstone of modern operational reporting, enabling smoother workflows and saving time. Metadata-driven architectures use descriptions of source tables, columns, and relationships to extract and load data automatically, replacing fragile, manually coded scripts that often fail when systems are updated. This level of automation ensures consistency and reliability.

Take the example of Peter MacDonald, a Senior IT Architect at a biotech company. By implementing prebuilt job steps for Informatica Cloud, he reduced daily processing time from 12 hours to just 45 minutes while handling up to 90 million rows of data daily. Similarly, The Retail Equation transitioned from 131 nightly file system processes to just four or five by replacing script-heavy schedulers with an automation platform.

Event-driven orchestration has taken automation a step further. Jobs are now triggered by real-world events - like file arrivals or system alerts - eliminating idle time between dependent tasks. Automated validation and testing are also embedded into pipelines, catching data quality issues before they affect dashboards. Reflecting the growing reliance on such systems, the global warehouse automation market, valued at $26.5 billion in 2024, is projected to grow at nearly 16% annually through 2034.

"The strongest business case for DWA is strategic. It is more about doing the right work consistently at a scale that manual approaches cannot sustain."

– Rostyslav Fedynyshyn, Head of Data and Analytics Practice, N-iX
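Event-driven orchestration with a built-in validation gate can be sketched as a small publish/subscribe registry. The event name `file_arrived`, the payload shape, and the "every row needs an id" check are hypothetical; dedicated orchestration platforms provide far richer versions of the same pattern.

```python
from collections import defaultdict

handlers = defaultdict(list)

def on(event_type):
    """Register a job to run whenever an event of this type is published."""
    def register(job):
        handlers[event_type].append(job)
        return job
    return register

def publish(event_type, payload):
    """Run every job registered for the event and collect their results."""
    return [job(payload) for job in handlers[event_type]]

@on("file_arrived")
def load_file(payload):
    rows = payload["rows"]
    # Validation gate: refuse to load a batch that contains missing ids.
    if any(r.get("id") is None for r in rows):
        return "rejected"
    return f"loaded {len(rows)} rows"

good = publish("file_arrived", {"rows": [{"id": 1}, {"id": 2}]})
bad = publish("file_arrived", {"rows": [{"id": 1}, {"id": None}]})
```

The job starts as soon as the file event is published rather than waiting for a scheduled slot, and the bad batch is stopped before it can reach a dashboard.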

Dashboards with Drill-Down Capabilities

An effective operational dashboard typically focuses on 5 to 7 key metrics. However, its true power lies in the ability to drill down from these high-level indicators to detailed, transaction-level data. For example, if a manager notices a surge in customer complaints, they can drill down to examine ticket IDs, timestamps, and the involved support agents. This level of granularity allows teams to respond quickly to emerging issues.
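In query terms, a drill-down is a summary query followed by a detail query filtered on whatever the user clicked. The sketch below assumes a hypothetical `tickets` table in SQLite with made-up rows.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE tickets (ticket_id TEXT, opened_at TEXT, agent TEXT, category TEXT)"
)
conn.executemany(
    "INSERT INTO tickets VALUES (?, ?, ?, ?)",
    [
        ("T1", "2025-03-01T09:05", "dana", "billing"),
        ("T2", "2025-03-01T09:20", "eli", "delivery"),
        ("T3", "2025-03-01T10:15", "dana", "billing"),
        ("T4", "2025-03-01T11:40", "fay", "billing"),
    ],
)

# High-level view: complaint counts per category.
summary = conn.execute(
    "SELECT category, COUNT(*) FROM tickets GROUP BY category ORDER BY COUNT(*) DESC"
).fetchall()

# Drill-down: transaction-level rows behind the largest category.
top_category = summary[0][0]
details = conn.execute(
    "SELECT ticket_id, opened_at, agent FROM tickets "
    "WHERE category = ? ORDER BY opened_at",
    (top_category,),
).fetchall()
```

Both queries read the same table, so the detail rows always add up to the number shown on the summary tile.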

Drill-down capabilities, combined with clear data lineage, empower teams to trace metrics back to their source. This transparency encourages accountability and reduces the time analysts spend reconciling conflicting reports - a common issue known as the "reconciliation tax". When metrics are reproducible and auditable, teams are more confident in their decisions.

Modern tools enhance this process further by incorporating semantic layers that define metrics in code. This prevents inconsistencies in KPI definitions across teams. Automated quality checks also play a crucial role, blocking incorrect data from reaching dashboards and maintaining user trust.

"If a metric cannot be reproduced from a logged pipeline run, it is not decision-grade."

– Alex Yudin, Head of Data Engineering, GroupBWT
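Defining a metric in code, as a semantic layer does, might look like the sketch below: one named definition that every report calls instead of re-implementing the formula. The `Metric` class, the `on_time_rate` metric, and the delivery rows are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    compute: Callable[[Sequence[dict]], float]

# Single shared definition; every dashboard evaluates this same formula.
METRICS = {
    "on_time_rate": Metric(
        name="on_time_rate",
        description="Share of deliveries that arrived on or before the promised time.",
        compute=lambda rows: sum(r["on_time"] for r in rows) / len(rows),
    ),
}

def evaluate(metric_name, rows):
    """Compute a registered metric; raises KeyError for undefined metrics."""
    return METRICS[metric_name].compute(rows)

deliveries = [
    {"on_time": True},
    {"on_time": True},
    {"on_time": False},
    {"on_time": True},
]
rate = evaluate("on_time_rate", deliveries)
```

Because the definition lives in version-controlled code, a change to the formula is visible and reviewable, and two teams cannot quietly end up with different versions of the same KPI. Note that this sketch does not handle an empty input, which would raise a division error.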

Data Storytelling and Transparency

Numbers alone can be misleading without proper context. Data storytelling adds depth to operational reports by providing benchmarks, historical comparisons, and concise explanations. For instance, instead of simply reporting a decline in sales, a well-crafted report might highlight seasonal trends and recommend actions like adjusting inventory.

Transparency is equally important. When data origins are unclear or governance is weak, it can lead to distrust in analytics. Shockingly, around 90% of businesses fail to maximize the value of their systems due to poor reporting practices. To counter this, reports should use clear visualizations to highlight anomalies and maintain a consistent, clean design.

"Don't just present data; provide context and suggest actions based on the insights."

– Julian Alvarado, Content Lead, Coefficient

Operational reports should align with business objectives. Reviewing the company’s strategic plan can help identify which metrics matter most. Tailor reports for the audience - frontline staff may need detailed data, while executives often prefer high-level summaries. Regular audits also ensure data accuracy over time.

Conclusion

Operational reporting in data warehouses has become a game-changer for businesses. By acting on data as it changes - spotting problems and enabling quick decisions - companies can avoid costly mistakes and seize opportunities in real time. The challenge? Traditional analytical warehouses, designed for batch processing and historical analysis, simply can't keep up with the fast-paced demands of modern operations.

That's where modern operational data warehouses (ODWs) come in. These systems provide continuously updated analytics, eliminating the need for outdated batch processing. With the ability to combine streaming data and standard SQL access, teams can access up-to-the-minute information without relying on fragile, overly complex solutions. This blend of real-time insights and straightforward SQL access is transforming how industries operate.

"Businesses will find that operational workloads are more valuable the fresher the data is; they cannot afford slow, stale, or incorrect data."

– Andrew Yeung, Sr. Director of Product Marketing, Materialize

The benefits of these systems go beyond immediate efficiency. They pave the way for predictive strategies that use both historical and live data to anticipate and address issues before they escalate. This means frontline teams get detailed, actionable insights to solve problems quickly, while automated workflows minimize errors and ensure consistent performance across the board.

As operational reporting evolves toward predictive intelligence, businesses equipped with these tools can adapt faster to changes in the market and meet customer needs head-on. Success lies in selecting the right infrastructure, automating wherever possible, and focusing on the metrics that directly impact daily operations.

FAQs

When should I use an ODS vs an ODW?

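An Operational Data Store (ODS) fits when you need a current-state snapshot consolidated from several transactional systems, for lookups such as an order's latest status or the products sold in the last hour. It overwrites data as new transactions arrive, so it is not the place to keep history. An Operational Data Warehouse (ODW) fits when you need continuously updated, transformed results: it ingests data as events occur, keeps materialized views and indexes current, and exposes them through SQL for workloads like fraud detection or dynamic pricing. For strategic analysis and long-term trend reporting on historical data that is refreshed in batches (daily or weekly), a traditional analytical data warehouse remains the better choice.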

How real-time is “real-time” reporting in practice?

Real-time reporting isn't a one-size-fits-all concept. In some cases, systems refresh data in mere seconds or even milliseconds - think financial trading platforms or e-commerce systems where every moment counts. On the other hand, operational BI dashboards might update every few minutes or even hours, depending on the use case.

The challenge lies in achieving true real-time reporting. This often demands sophisticated technologies like data replication, in-memory processing, or data virtualization. These solutions can be complex and resource-intensive, making real-time implementation tricky for many applications.

What’s the easiest way to add CDC and streaming safely?

The simplest and most reliable way to implement CDC (Change Data Capture) and streaming is to use established tools designed for the job rather than hand-built polling scripts. Managed services like Streamkap and Fivetran, and the open-source Debezium, tap into transaction logs (such as WAL, binlog, or oplog) to efficiently capture changes and stream them into data warehouses or lakes. These tools help minimize complexity while preserving data consistency and preventing loss.